LEAD-AI User Survey | February 2026 | ESCP Berlin, Halmstad University, Haikara, Europroject

Everyone talks about AI. But who is actually using it, how are they learning, and what is holding them back? A new cross-European survey by the LEAD-AI project went beyond the headlines to ask 70 working professionals (educators, cybersecurity experts, entrepreneurs, managers, and administrative staff across Germany, Denmark, France, and beyond) about their lived experience with artificial intelligence. The results reveal a workforce that is enthusiastic, self-driven, and largely unsupported.

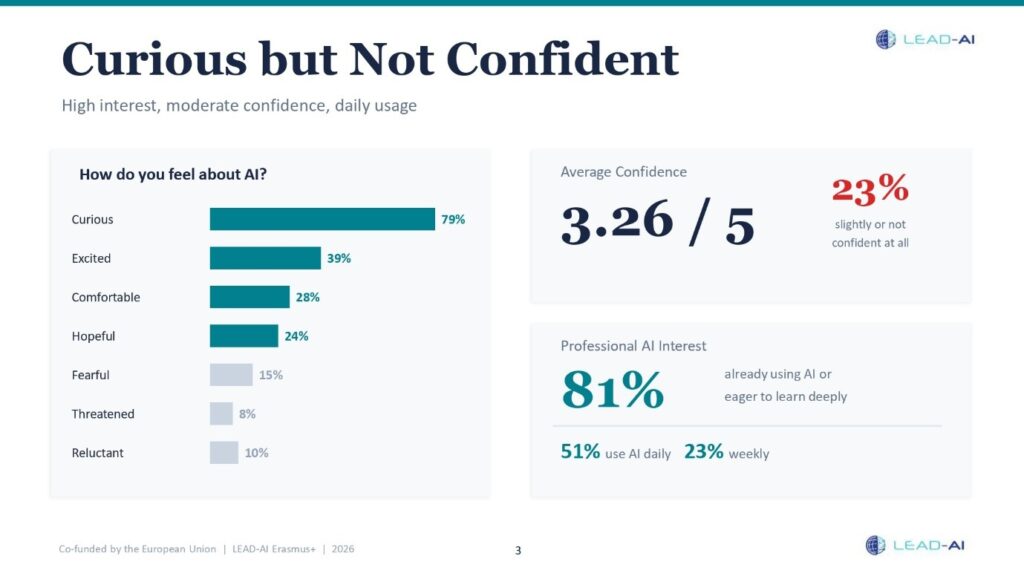

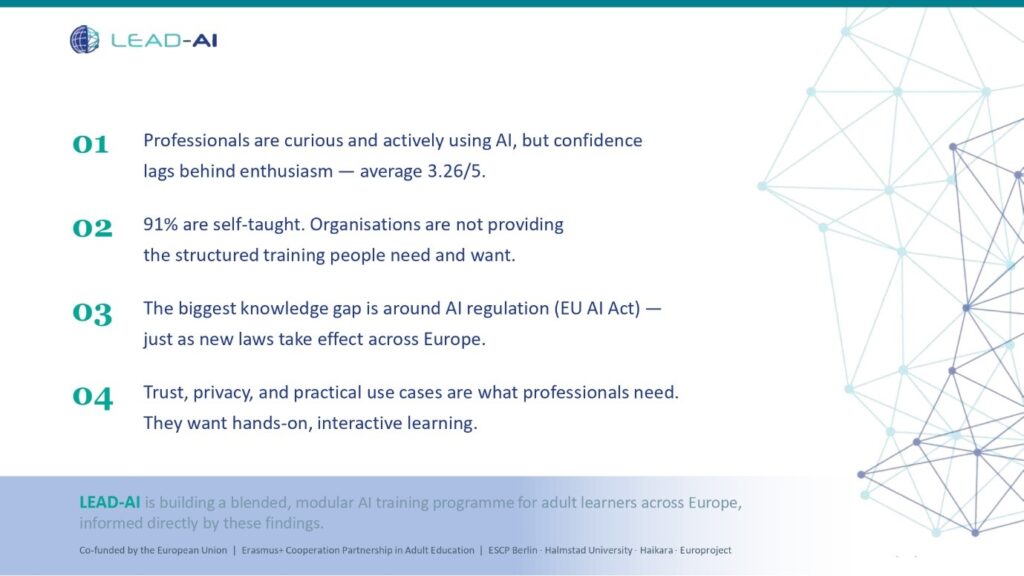

Most People Are Curious – But Confidence Lags Behind

The dominant emotion professionals associate with AI is not fear – it is curiosity. A striking 79% described themselves as curious, while 39% said they felt excited. Over half (51%) already use AI tools daily, and another 23% do so weekly. Yet when asked to rate their confidence, respondents averaged just 3.26 out of 5. Nearly one in four (23%) said they felt only slightly confident or not confident at all. In other words, people are jumping in, but many are swimming without a lifeguard.

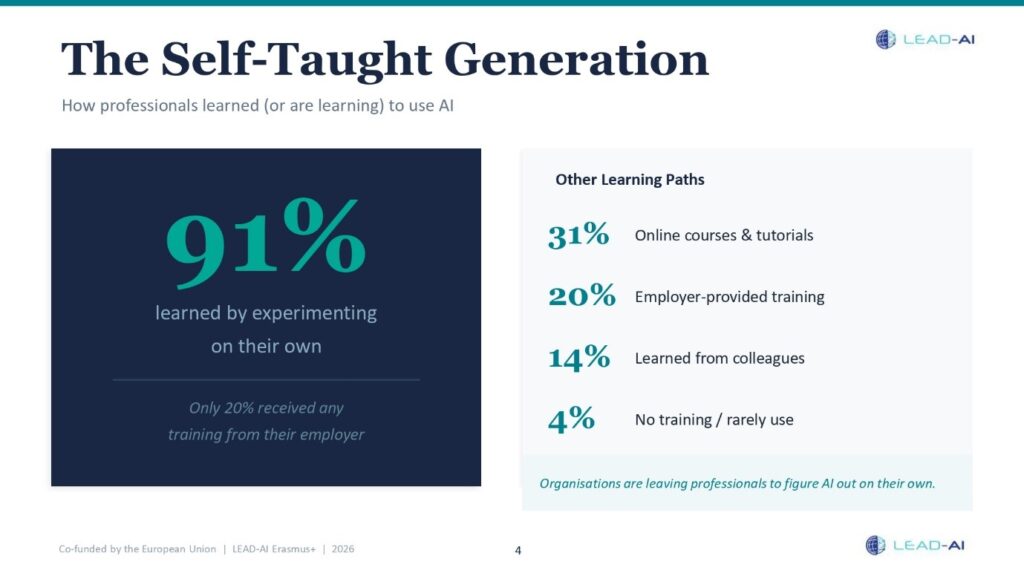

The DIY AI Generation

Perhaps the most striking finding is how people learned to use AI in the first place: 91% said they taught themselves by experimenting on their own. Only one in five (20%) received any training from their employer. Just 14% learned from colleagues, and 31% turned to online courses and tutorials. The message is clear: professionals are not waiting for their organisations to catch up. They are figuring it out alone, through trial and error, YouTube videos, and late nights with ChatGPT. This shows remarkable initiative, but it also means many are building habits without structured guidance on accuracy, ethics, or best practices.

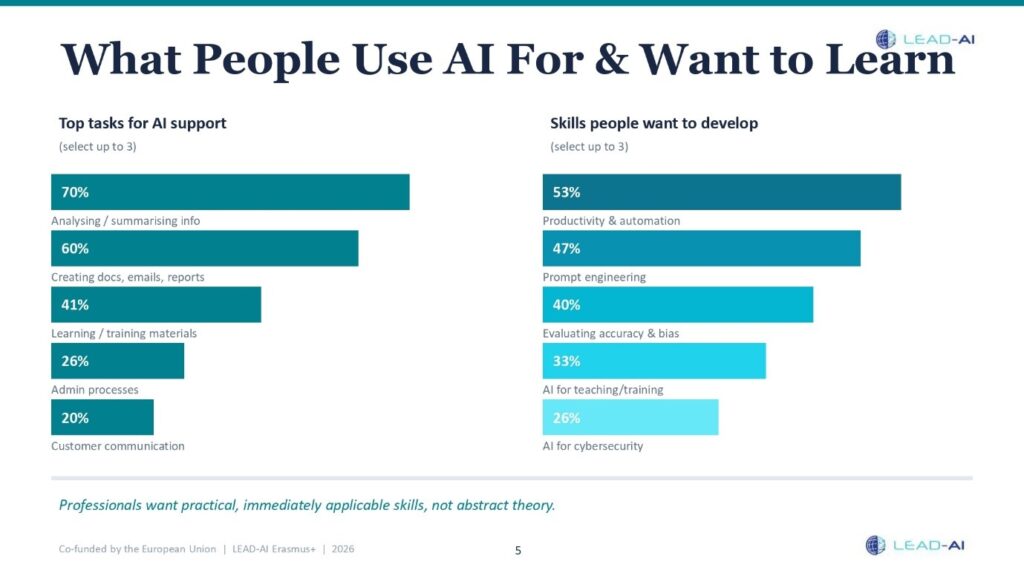

What People Actually Use AI For – And Want to Learn

Respondents are not using AI for exotic, cutting-edge applications. The most common tasks are thoroughly practical: analysing and summarising information (cited by 70% of respondents), creating documents, emails, or reports (60%), and building learning or training materials (41%). When asked which skills they most want to develop, the top three were AI for productivity and automation (53%), prompt engineering (47%), and evaluating AI accuracy and reliability (40%). These are professionals who want to work smarter, not just differently.

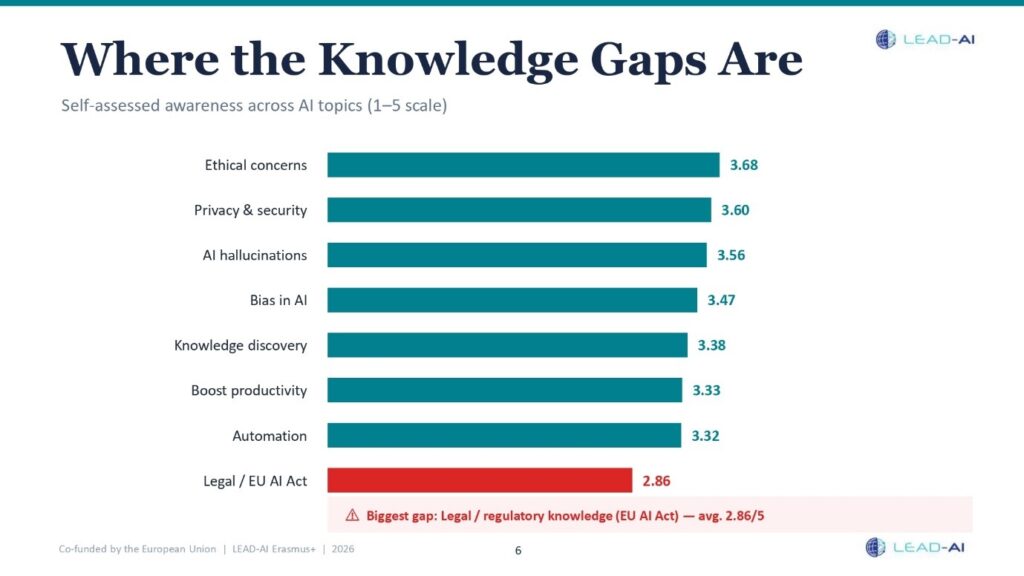

The Regulatory Blind Spot

Self-assessed knowledge was moderate across most AI topics, averaging between 3.3 and 3.7 out of 5. But one area stood out as a clear weak point: legal and regulatory knowledge (including the EU AI Act) scored just 2.86 out of 5, the lowest of any topic tested. With the EU AI Act’s obligations now being phased in across Europe, this gap is both timely and concerning. Professionals know about hallucinations and bias in the abstract, but many have little understanding of the rules that will govern how AI can be used in their workplaces.

Trust Is the Real Barrier

Asked the open-ended question “What is the single biggest thing you need to feel confident using AI?”, respondents gave answers that were candid and often personal. Some wanted technical reassurance: “A reliable way to detect hallucinations.” Others needed institutional clarity: “My employer must establish a policy so I know which tools I am allowed to use.” Several were concerned about data: “Knowing where the input data is stored and how it’s used.” And some spoke to a deeper aspiration: “That it’s helping me improve my own skills or cognition, not replacing them.”

Among managers and entrepreneurs, 60% named a lack of skills as the biggest organisational obstacle to AI adoption, followed by resistance to change and legal uncertainty (30% each). Cybersecurity professionals flagged the reliability of AI outputs (60%) and concerns about legal admissibility (53%), which is understandable in a field where wrong answers can have serious consequences.

What Kind of Training Do People Want?

When it comes to learning, professionals showed a strong preference for interactive online courses (chosen by half of respondents), followed by extra time for hands-on practice (30%) and face-to-face training (29%). Access to specific tools and templates also ranked highly. Formal accreditation was not the primary driver for most respondents, although certificates still hold value as a way to recognise participation and achievement. The takeaway? People are looking first and foremost for practical, hands-on skills they can apply immediately, with certification serving as a meaningful complement to that experience.

What This Means for Europe’s AI Skills Gap

This survey paints a picture of a motivated but under-served workforce. Professionals across very different sectors (from classrooms to forensics labs to small business offices) are actively engaging with AI. But they are doing so largely without formal training, clear workplace policies, or structured support. The opportunity is enormous: with the right programme (hands-on, modular, and role-specific), these professionals can move from curious experimenters to confident, responsible AI users.

The LEAD-AI project is building exactly that. Using these survey results as a foundation, the consortium is developing a blended AI training programme covering fundamentals, prompt engineering, ethical use, and sector-specific applications, all hosted on an open-access e-learning platform. Because the real question is no longer whether professionals want to use AI.

They already do. The question is whether we’ll help them do it well.